Static keyword in C++ can often be a cause of confusion for many people because of its varied use cases. If you are one of them, hope this article helps you alleviate it.

In C++, static is used within three contexts : class, function and translation unit. And in each of these three contexts, they serve different purposes.

Within a class context, a class variable or a class method can be defined as static. A variable or a method declared as static is solely owned by the class. The class instances (class objects) do not get their own copy of the static members. So there is always only a single copy of a class’s static members which is in contrast to the other members of the class (each class instance gets its own copy of these members). Everyone with access to the static member always works on this single copy.

A static variable cannot be initialized within the class unless it is declared also as a constant. The following class declaration works because the static variable is also declared as a constant.

The only way to initialize a static variable is outside of the class using the following construct.static_variable_type class_name::static_variable_name = value. If you define your class in a header file (“.h” ) file, you should not add the initialization line for static variables in the same file. It should only be added in a (“.cpp”) file.

Note that a static variable can be accessed by the same class instances just like they access any other member variable. It is just that they cannot initialize the static variable. A class instance can even use the construct object_name. static_variable_name to access the static variable.

A static variable declared as public can be accessed anywhere in the program using class_name::static_variable_name. But If the static variable is declared as private, it cannot be accessed outside of the class. It is in such situations that the static methods come into the foray.

Static methods are used to modify/retrieve the value of the static variables. You can always access a private static variable by using class instances. But you need to go through the pain of creating an instance just to access the static variable. A much easier way is to use a static method. Like static variable, static methods also do not need a class instance (object) to become accessible.

A static method, as long as it is declared as public (which is usually the case), can be accessed anywhere in the program using class_name::static_method_name. Note that a static method can access only the static members of a class. You cannot use it to access non static members because of obvious reasons (Come on!! you can figure out the reason yourself!). Static methods can be defined within the class or outside of a class just like other class methods. Another important point to note is that a static method do not have the “this” pointer associated with it as it is not attached to any object.

Before reading the next two uses cases of static, I highly recommend you to go through my other article that discusses scopes and linkage in C++. You might not fully understand the below two use cases otherwise.

Within In a function context, a local variable declared as static will retain its value in memory until the end of the program. Note that the variable can still be accessed only from within its scope.

A local static variable declared within a function retains its value across multiple calls to that function. All the function calls will use the same variable instance. But you will not be able to access this static variable outside of the function. In the below example, static variable ‘count’ is common to all calls to the function ‘counter()’;

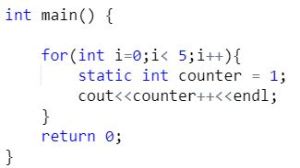

Similarly, as you can see in the below example, a local static variable declared within a loop retains its value across multiple iterations of the loop.

Within a translation unit context,

As discussed in the other article (here), a translation unit is the basic unit of compilation in C++. A global variable (which is available throughout the translation unit right from its point of declaration) has an external linkage by default and it is visible to the other translation units within the project.

But if you do not want the global variable to be visible to other translation units, you can declare it as static. Adding static in front of the global variable gives it an internal linkage and hence becomes inaccessible to the other translation units.

Leave a comment